Marketing is a combination of art and science. The best marketing campaigns involve plenty of creativity, but they also rely on data and testing to ensure their success. That’s where your email AB testing tools come in!

AB testing is essential if you want to improve and optimise your email campaigns to suit your audience and their preferences. So we’re going to explore the basics of email AB testing, and how you can test different elements in your emails to see if they are hot or not!

What is AB testing in email marketing?

Email AB testing tools help you compare two email message versions to determine which elements perform better. AB testing gives marketers valuable insights into how subscribers interact with their emails. This allows them to make informed decisions based on data rather than guesswork. AB testing also helps marketers understand whether any changes in their email impacted performance over time.

AB testing tools are essential for any marketer looking to drive maximum engagement from their email list. With the help of these tools, which we’ll touch on further below, marketers can quickly identify what resonates best with their subscribers.

What email content should you AB test?

The most common email elements you can AB test include:

- Email subject line

- Call-to-action

- Sender name

- Email content

- Timing

By testing these elements, you can determine which versions lead to higher conversions, open rates and engagement. However, it’s crucial to test only one element at a time so you can accurately attribute any change in email performance to it.

AB testing subject lines

One of the most important elements you should be testing is your email subject line. After all, it is the first thing subscribers see. Approximately 50.39% of emails get opened within a 6-hour time frame, which is why we recommend running your AB test for the first 1-2 hours after sending.

In Vision6, we have our own AB testing tool built in to help you compare two subject lines. Our system automatically splits your audience into two testing groups, serving them a different subject line. The subject line that performs the best is what our system will serve to the rest of your contacts to help boost your open rates.
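To make the split-and-send idea concrete, here is a minimal Python sketch of the general technique. It is not Vision6's actual implementation: the function names, the 20% test sample and the tie-breaking rule are all assumptions made for illustration.

```python
import random


def split_for_ab_test(contacts, test_fraction=0.2, seed=42):
    """Randomly pick a test sample and halve it into groups A and B.

    Returns (group_a, group_b, remainder). The remainder waits for the winner.
    test_fraction and seed are illustrative defaults, not platform settings.
    """
    shuffled = list(contacts)
    random.Random(seed).shuffle(shuffled)  # shuffle a copy, leave the input intact
    half = round(len(shuffled) * test_fraction) // 2
    group_a = shuffled[:half]
    group_b = shuffled[half:2 * half]
    remainder = shuffled[2 * half:]
    return group_a, group_b, remainder


def pick_winner(opens_a, sent_a, opens_b, sent_b):
    """Return "A" or "B" for the subject line with the higher open rate (ties go to A)."""
    rate_a = opens_a / sent_a if sent_a else 0.0
    rate_b = opens_b / sent_b if sent_b else 0.0
    return "A" if rate_a >= rate_b else "B"


if __name__ == "__main__":
    contacts = [f"user{i}@example.com" for i in range(100)]
    group_a, group_b, remainder = split_for_ab_test(contacts)
    print(len(group_a), len(group_b), len(remainder))
    print(pick_winner(opens_a=4, sent_a=10, opens_b=3, sent_b=10))
```

Shuffling before splitting matters: if the groups were taken in list order, differences such as sign-up date could skew the comparison.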

The goal is to create a subject line that piques the recipient’s interest and conveys the content of the email. Some companies prefer to maintain consistency in their subject lines so that recipients can easily recognise the emails in their inbox. On the other hand, others may choose to spice things up with an emoji based on the content of each email.

- Emojis vs No Emojis: Emojis have become increasingly popular recently and can help to increase open rates in some cases. Marketers can test subject lines with and without emojis to determine if they grab the recipient’s attention. It’s important to note that while emojis can be helpful, they may not always fit with the tone and message of the email, so testing is crucial.

- Personalisation: Personalise subject lines with the recipient’s name, location, or other relevant information. This increases the likelihood of the email being opened. Emails with customised subject lines result in a 50% increase in open rates. AB testing subject lines with personalisation provides insights into what appeals to your audience. For example, marketers can try a subject line that includes the recipient’s name. They can also test a subject line that includes a personalised message based on the recipient’s previous interactions with the brand.

The from name

When you’re trying to figure out what type of email content should be AB tested, it’s crucial to think about the from name. This is the most eye-catching part of the email and can significantly affect its performance. You can use a company name like “Vision6 Marketing Team” or a personal name like “Kathy @Vision6”.

We recommend testing this variation over a couple of months, which will help you decide if one performs better than the other for maximum impact on your audience. A combination of the two might give you even better results. It never hurts to try something new!

Email images

Email images are a key element in creating visually appealing and engaging emails. Therefore, AB testing email images is vital to boost overall email performance.

By checking your Vision6 click maps tool, you’ll be able to see which email elements attract the most clicks. Is it linked text, a call-to-action button or your images? If you find your images aren’t contributing to overall engagement, you might want to consider experimenting with more interactive content.

You might be tempted to strip all images from your emails. After all, they can slow your load time. However, we recommend making sure your email doesn’t appear too text-heavy, as this can overwhelm your readers as soon as they open it.

PRO TIP: Using conditional content for your images – We recommend using our conditional content feature to personalise images for each of your subscribers. You can draw on your different data sets to show different images based on a subscriber’s preferences, gender or geo-location.

Email copy

The content of an email can significantly impact its success. Test different elements to understand what viewers prefer and maximise click-through rates.

Don’t flood your emails with all these changes at once. Otherwise, you’re going to have a difficult time determining what’s working and what’s not. We recommend tackling one email component at a time. Our in-depth reporting insights provide the perfect email AB testing tool for you to easily pinpoint what people are clicking, down to what device they opened your email on.

There are a couple of things we recommend AB testing:

- Email copy length

This is a highly debated topic amongst email marketers, and it is met with varying answers. Not to play the devil’s advocate, but there’s no right answer. It depends entirely on the intention of your email. While many believe a lengthy email just forces readers to work harder, it may also be needed in some cases. For example, if you’re launching a new product, taking the time to cover all the benefits may be what’s needed to get readers across the line.

- Personalisation

The beauty of email lies in its capacity to connect with your audience on a personalised level. By incorporating personalisation, you can strengthen the bond with your readers and inspire them to take action. Experiment with dynamic content using conditions, leveraging data from your CRM. This could include factors such as gender, age, location, interests, purchase history, or any other segment that you have created.

- Tone

Your emails should be a reflection of your brand’s personality, meaning that as soon as an email hits their inbox, your subscribers should know it’s you by your written voice. Try mixing it up a bit to see what works for your audience. But if you are working humour in, make sure you keep it tasteful and non-offensive.

Email call-to-actions

One of the most critical parts of any email marketing campaign is getting subscribers to take action. So, you must test the effectiveness of calls to action in your emails. This means trying the basics like size and colour, copywriting, and positioning on the page. For example, a less visible call to action may get fewer clicks. But if you place it in a relevant position, it’s likely to attract more attention from readers and, thus, more clicks.

Moreover, consumers prefer clarity over surprise when it comes to calls to action. It is crucial to clearly communicate what they will receive if they take the desired action. A well-timed and relevant message demonstrates that you can provide genuine support.

Send times

Send times are a critical factor in determining the success of an email campaign. This includes:

- Day of the Week: Test sending emails on different days, for example weekdays versus weekends, and check whether there is a difference in open rates, clicks, and conversions.

- Time of Send: The time of day you send your emails can also affect their success. Test sending emails at different times, such as mornings, afternoons, and evenings, to determine the best time to send them to your audience.

- Send Cadence: The frequency at which you send emails can also have an impact. Test several send cadences, for example daily, weekly, or monthly, to determine the best send frequency for your audience.

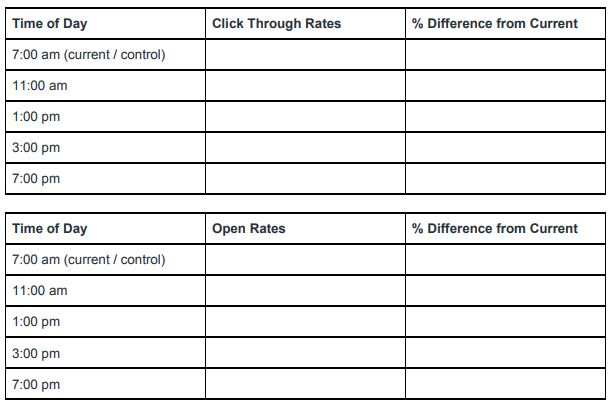

Keep a table of results over time so you can track which time yields the best open rates or click-through rates. For example:
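If you keep such a results table in a script rather than a spreadsheet, a small helper can pick out the best-performing slot. The Python sketch below is only an illustration: the `SendResult` fields, the slot labels and every number in it are made up, not real campaign data.

```python
from dataclasses import dataclass


@dataclass
class SendResult:
    """One row of the results table (hypothetical fields)."""
    send_slot: str   # e.g. "Tue 09:00"
    delivered: int
    opens: int
    clicks: int

    @property
    def open_rate(self) -> float:
        return self.opens / self.delivered if self.delivered else 0.0

    @property
    def click_rate(self) -> float:
        return self.clicks / self.delivered if self.delivered else 0.0


def best_slot(results, metric="open_rate"):
    """Return the send slot with the highest value for the chosen metric."""
    return max(results, key=lambda r: getattr(r, metric)).send_slot


if __name__ == "__main__":
    # Illustrative numbers only.
    table = [
        SendResult("Tue 09:00", delivered=1000, opens=320, clicks=40),
        SendResult("Thu 14:00", delivered=1000, opens=280, clicks=55),
        SendResult("Sat 10:00", delivered=1000, opens=210, clicks=25),
    ]
    for row in table:
        print(f"{row.send_slot}: open {row.open_rate:.1%}, click {row.click_rate:.1%}")
    print("Best for opens:", best_slot(table))
    print("Best for clicks:", best_slot(table, metric="click_rate"))
```

Note that the best slot for opens is not necessarily the best slot for clicks, so decide which metric matters for the campaign before comparing.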

Email AB testing tools

Here are some email AB testing tools that help you determine which elements of your email campaigns are most impactful. This allows you to optimise and improve results over time.

Vision6 AB testing tool

Use our platform to automatically AB test two subject lines on a sample of contacts from your desired list. You can use the winner (the one with the higher open rate) for the remaining contacts. All it takes is one push of a send button – let us do all the work while ensuring that the majority get engaging content tailored specifically for them!
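If you ever compare two versions by hand, it can help to check whether the gap in open rates is bigger than random noise before declaring a winner. The Python sketch below uses a standard two-proportion z-test for that. It is a general statistical technique, not a description of how Vision6 selects its winner, and the function name and sample figures are assumptions for illustration.

```python
import math


def two_proportion_z_test(opens_a, sent_a, opens_b, sent_b):
    """Two-sided z-test for the difference between two open rates.

    Returns (z, p_value). A small p-value (commonly below 0.05) suggests
    the difference is unlikely to be due to chance alone.
    """
    rate_a = opens_a / sent_a
    rate_b = opens_b / sent_b
    # Pooled open rate under the assumption that both versions perform the same.
    pooled = (opens_a + opens_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    if std_err == 0:
        return 0.0, 1.0
    z = (rate_a - rate_b) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Illustrative figures: 300 of 1000 opened version A, 250 of 1000 opened version B.
    z, p = two_proportion_z_test(300, 1000, 250, 1000)
    print(f"z = {z:.2f}, p = {p:.3f}")
```

With small test groups, even a visible gap in open rates may not pass this check, which is one reason to test on a reasonably large sample.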

Vision6 click maps

Looking to understand which content in your emails is most engaging? Vision6’s click maps reveal the popularity of each link, visually representing how many subscribers have clicked on it. Hover over a hotspot for detailed insights into its performance – giving you valuable data to help refine your email strategy!

Nifty Images

Another platform that offers email AB testing tools is Nifty Images, which can test the success of email images and conditional content in header images. It goes beyond a traditional two-way AB split test: you can test several variants, choose a winner based on a number of metrics, and view real-time statistics. With Nifty Images, you can customise distribution, select a winning metric, and decide when to stop testing variants.

Optimizely

Take your email marketing game to the next level with Optimizely! This digital experience platform lets you amp up campaigns through automated emails and powerful AB testing. You can try out different elements, like subject lines and header images, and target specific audiences, all while tracking results in real time to maximise revenue. Unleash a whole new world of possibilities for digital engagement!

Vision6 Litmus testing

Sending a test email to yourself only lets you check how it looks in your own email program. Vision6’s built-in Litmus testing feature lets you test your email across various devices, in multiple programs and with dark mode options.

Conclusion

Email marketing is a powerful tool for reaching customers, but it’s important to ensure your messages are well-received and effective. Optimise your email content with email AB testing tools like Vision6, Nifty Images, Optimizely, and Vision6 Litmus Testing. Follow these email AB testing best practices, keep testing continuously, and you can crack the code to successful email marketing. Take your productivity to the next level with our free email testing! Start your journey to hassle-free emailing now.